Problem: Train a physics-informed neural network (PINN) on both the van der Pol oscillator and the drift-free Fokker-Planck diffusion equation.

Solution:

Spectral Bias of Physics-Informed Neural Networks¶

1. Introduction and Background¶

The physics-informed neural network (PINN) paradigm is a form of unsupervised machine learning first proposed in Raissi et al. (2019). At inference time, one freezes the model’s internal parameters and queries the PINN at an arbitrary point of the relevant domain (typically some spacetime event $(\mathbf{x}, t)$). The PINN outputs its prediction $\hat{\phi}(x,t)$ for the actual value $\phi(x,t)$ of the PDE solution at that input point, for some fixed initial/boundary conditions and constant parameters in the PDE.

This motivates the training stage to be based off an MSE cost function that simultaneously enforces both the initial/boundary conditions and the PDE constraint itself. At training time, one randomly samples a batch of $N_{\partial}$ points on the boundary and $N_{\text{int}}$ points in the interior of the domain. For each point, the PINN prediction $\hat{\phi}(x_i, t_i)$ is computed, and automatic differentiation (the same chain rule algorithm that powers backpropagation) is applied to obtain the PINN’s predictions for the relevant partial derivatives $\partial\phi/\partial x, \partial\phi/\partial t, \partial^2\phi/\partial x^2, \ldots$ as dictated by the PDE, which is assumed to take the kernelized form $\text{PDE}(\phi(x,t))=0$. The total cost function is then:

$$C(\theta) = \frac{\lambda_{\partial}}{2N_{\partial}}\sum_{i=1}^{N_{\partial}}(\hat{\phi}_{\theta}(x_i,t_i) – \phi(x_i,t_i))^2 + \frac{\lambda_{\text{int}}}{2N_{\text{int}}}\sum_{i=1}^{N_{\text{int}}}\text{PDE}^2(\hat{\phi}_{\theta}(x_i,t_i))$$

The parameters $\lambda_{\partial}, \lambda_{\text{int}}$ are hyperparameters meant to emphasize one component of the loss more heavily (e.g. to prevent pathological underfitting such as $\hat{\phi}=0$, one should choose $\lambda_{\partial} \gg \lambda_{\text{int}}$).

In this project, I explore and quantify the difficulties that PINNs have at modelling high-frequency spatial and temporal Fourier components of PDE solutions, following work of Wang et al. (2021) which in turn follows from pioneering early work by Rahaman et al. (2018) and Xu et al. (2019) on the spectral bias and frequency principle of deep neural networks outside the specialized context of PINNs. It is hypothesized that the smoothness of the activation function (which in the experiments below are always $\tanh$ activations) could induce a smoothness prior on the PINNs responsible for this spectral bias.

The project is split into two experiments:

- Van der Pol oscillator (ODE): probing spectral bias in the time domain via relaxation oscillations.

- Fokker-Planck equation (PDE): probing spectral bias in the spatial domain via increasingly narrow initial conditions.

2. Algorithms and Implementation¶

2.1 Network Architecture¶

Both experiments use the same core architecture: a fully-connected multilayer perceptron (MLP) with 5 hidden layers of 64 neurons each, $\tanh$ activations, and Xavier normal weight initialization. Xavier initialization draws initial weights from $\mathcal{N}(0, \sigma^2)$ with $\sigma^2 = 2/(n_{\text{in}} + n_{\text{out}})$, which is the optimal scaling for networks with symmetric, saturating activations like $\tanh$, as it ensures the initial inputs to each activation fall into the active linear region, avoiding vanishing or exploding gradients at the start of training.

For the van der Pol experiment, the input is 1-dimensional ($t \mapsto \hat{x}(t)$). For the Fokker-Planck experiment, the input is 2-dimensional ($(x,t) \mapsto \hat{p}(x,t)$).

2.2 Training¶

All models were trained with the Adam optimizer using a learning rate schedule consisting of a linear warmup phase followed by cosine annealing decay. Collocation points were re-sampled uniformly at random each epoch.

2.3 Hardware¶

All training was performed on a single NVIDIA GPU via PyTorch with CUDA. Each van der Pol model (15,000 epochs) took roughly 1-2 minutes; each Fokker-Planck model (15,000 epochs) took roughly 3-4 minutes.

3. Experiment 1: Van der Pol Oscillator¶

3.1 Problem Setup¶

The van der Pol equation is a second-order stiff nonlinear ODE first proposed by electrical engineer Balthasar van der Pol as a model of the voltage $x(t)$ in certain vacuum tube circuits:

$$\ddot{x} + \mu(x^2 – 1)\dot{x} + x = 0$$

For $\mu = 0$ this reduces to the simple harmonic oscillator $\ddot{x} + x = 0$. As $\mu$ increases, the system develops a stable limit cycle that becomes increasingly contorted in $(x, \dot{x})$ phase space, leading to relaxation oscillations with sharp, high-frequency transitions. The period grows asymptotically as $T \sim (3 – 2\ln 2)\mu$ for $\mu \gg 1$.

I first learned about this dynamical system in high school while reading Strogatz’s textbook on Chaos and Nonlinear Dynamics. Upon doing initial research into PINNs, I learned that they had a known deficiency with respect to high-frequency signals, and upon searching for a simple toy ODE to illustrate this, I recalled the van der Pol oscillator and how its relaxation oscillations could serve as a natural probe of the spectral bias.

In all experiments, I used the initial conditions $x(0) = 1$, $\dot{x}(0) = 0$, and varied the damping coefficient $\mu = 0, 0.1, 0.2, \ldots, 1.0, 2.0, \ldots, 10.0$. Training from scratch with Xavier initialization, only the $\mu = 0$ PINN was able to learn the sinusoidal SHM solution. To address this, I adopted a curriculum training strategy: the $\mu = 0$ checkpoint was used to initialize the $\mu = 0.1$ PINN, whose checkpoint in turn initialized the $\mu = 0.2$ PINN, and so forth.

import sys, os

import numpy as np

import matplotlib.pyplot as plt

import torch

# Add module directories to path

sys.path.insert(0, os.path.join(os.getcwd(), 'vanderpol_PINN'))

sys.path.insert(0, os.path.join(os.getcwd(), 'fokkerplanck_PINN'))

from vanderpol_PINN.model import VanillaPINN

from vanderpol_PINN.vanderpol_data import generate_reference_solution

from fokkerplanck_PINN.model import VanillaPINN2D

from fokkerplanck_PINN.fokkerplanck_data import analytical_fokker_planck

DEVICE = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

print(f"Using device: {DEVICE}")

Using device: cuda

3.2 Results: Time Series and Phase Portraits¶

The figures below show the curriculum training results for $\mu = 0$ through $\mu = 6$. The left column compares the PINN prediction (blue dashed) against the reference Radau solution (black solid), alongside the training loss curve. The right column shows the corresponding phase portrait $(x, \dot{x})$ with the nullclines of the dynamical system and a background vector field.

Even with the curriculum training approach, by about $\mu \approx 5$ the PINN prediction starts deviating from the Radau solution. This is evidence for spectral bias in the time domain: the PINN cannot represent the sharp, high-frequency transitions of the relaxation oscillations.

def evaluate_pinn_with_derivatives(model, t_eval):

# Evaluate a trained PINN, returning x(t) and x_dot(t) via autodiff.

model.eval()

t_tensor = torch.tensor(t_eval, dtype=torch.float32, device=DEVICE).unsqueeze(1)

t_tensor.requires_grad_(True)

x_pred = model(t_tensor)

x_dot_pred = torch.autograd.grad(

x_pred, t_tensor, grad_outputs=torch.ones_like(x_pred), create_graph=False

)[0]

return (x_pred.detach().cpu().numpy().flatten(),

x_dot_pred.detach().cpu().numpy().flatten())

def plot_vdp_comparison(mu_values, ckpt_dir, T_DOMAIN=17.0):

# Load checkpoints and plot time series + phase portraits side by side.

for mu in mu_values:

mu_str = f"{mu}".replace('.', 'p')

ckpt_path = os.path.join(ckpt_dir, f'mu_{mu}_vanilla_mu_{mu_str}.pt')

if not os.path.exists(ckpt_path):

print(f"Checkpoint not found: {ckpt_path}, skipping.")

continue

checkpoint = torch.load(ckpt_path, map_location=DEVICE, weights_only=False)

model = VanillaPINN()

model.load_state_dict(checkpoint['model_state_dict'])

model.to(DEVICE)

# Reference solution

t_ref, x_ref, xdot_ref = generate_reference_solution(mu, t_span=(0, T_DOMAIN), num_points=2000)

# PINN prediction

x_pinn, xdot_pinn = evaluate_pinn_with_derivatives(model, t_ref)

# Training loss history

loss_hist = checkpoint.get('loss_history', None)

fig, axes = plt.subplots(1, 2, figsize=(14, 4.5))

# --- Time series ---

ax = axes[0]

ax.plot(t_ref, x_ref, 'k-', lw=2, label='Reference (Radau)')

ax.plot(t_ref, x_pinn, 'b--', lw=1.5, label='Vanilla PINN', alpha=0.8)

ax.set_xlabel('$t$'); ax.set_ylabel('$x(t)$')

ax.set_title(f'Time Series, $\\mu = {mu}$')

ax.legend(fontsize=9); ax.grid(True, alpha=0.3)

# Inset for loss curve if available

if loss_hist is not None:

ax_inset = ax.inset_axes([0.55, 0.55, 0.4, 0.4])

losses = loss_hist if isinstance(loss_hist, list) else loss_hist.get('total', loss_hist)

ax_inset.semilogy(losses, 'r-', lw=0.5)

ax_inset.set_xlabel('Epoch', fontsize=7); ax_inset.set_ylabel('Loss', fontsize=7)

ax_inset.tick_params(labelsize=6)

# --- Phase portrait ---

ax = axes[1]

# Nullcline: x_dot = -x / (mu*(x^2 - 1))

if mu > 0:

x_nc = np.linspace(-3, 3, 500)

nc_color = '#2ca02c'

for mask in [x_nc < -1, (x_nc > -1) & (x_nc < 1), x_nc > 1]:

seg = x_nc[mask]

if len(seg) > 0:

ax.plot(seg, -seg / (mu * (seg**2 - 1)), color=nc_color,

ls='--', lw=1.2, alpha=0.6)

ax.plot(x_ref, xdot_ref, 'k-', lw=2, label='Reference (Radau)')

ax.plot(x_pinn, xdot_pinn, 'b--', lw=1.5, label='Vanilla PINN', alpha=0.8)

ax.plot(x_ref[0], xdot_ref[0], 'ro', ms=7, label='IC')

ax.set_xlabel('$x$'); ax.set_ylabel('$\\dot{x}$')

ax.set_title(f'Phase Portrait, $\\mu = {mu}$')

ax.legend(fontsize=8); ax.grid(True, alpha=0.3)

plt.tight_layout()

plt.show()

# Plot van der Pol results for the curriculum training experiment

mu_curriculum = [0.0, 0.5, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0]

plot_vdp_comparison(mu_curriculum, 'vanderpol_PINN/checkpoints')

c:\Users\weidu\AppData\Local\Programs\Python\Python313\Lib\site-packages\torch\autograd\graph.py:823: UserWarning: Attempting to run cuBLAS, but there was no current CUDA context! Attempting to set the primary context... (Triggered internally at C:\actions-runner\_work\pytorch\pytorch\pytorch\aten\src\ATen\cuda\CublasHandlePool.cpp:180.) return Variable._execution_engine.run_backward( # Calls into the C++ engine to run the backward pass

4. Experiment 2: Fokker-Planck Equation¶

4.1 Problem Setup¶

The second experiment steps up in complexity from an ODE to a PDE. We consider the drift-free Fokker-Planck equation (i.e. the diffusion/heat equation) for a probability distribution $p(x,t)$ in one spatial dimension:

$$\frac{\partial p}{\partial t} = D \frac{\partial^2 p}{\partial x^2}$$

for diffusion constant $D$. When the initial condition is an infinite spike at the origin, $p(x,0) = \delta(x)$, the analytical solution (the heat kernel/Green’s function) is a Gaussian whose width grows with variance $2Dt$:

$$p(x,t) = \frac{1}{\sqrt{4\pi D t}} \exp\!\left(-\frac{x^2}{4Dt}\right)$$

Because a $\delta$-function is a high-frequency spike (its Fourier transform $\int_{-\infty}^{\infty}dx\, e^{-ikx}\delta(x) = 1$ contains all spatial frequencies uniformly), PINNs should have difficulty modelling it. To quantify when this failure occurs, we set $D = 1$ and initialize the PINN with $p(x,0) = \frac{1}{\sqrt{4\pi t_0}}\exp(-x^2/4t_0)$ for some $t_0 > 0$. As $t_0 \to 0$, the initial condition approaches $\delta(x)$ and should become harder for the PINN to represent.

We truncate the spatial domain to $x \in [-L, L]$ with $L = 10$ and impose Dirichlet boundary conditions $p(\pm L, t) = 0$, which is a reasonable approximation since the Gaussian tails decay exponentially. The PINN architecture is the same MLP as before but with a 2-dimensional input $(x, t)$: five hidden layers of 64 neurons each with $\tanh$ activations and Xavier normal initialization.

4.2 Cost Function¶

The cost function has three terms:

$$C(\theta) = \frac{1}{N_{\text{PDE}}}\sum_{i=1}^{N_{\text{PDE}}}\!\left(\frac{\partial\hat{p}_\theta}{\partial t} – D\frac{\partial^2\hat{p}_\theta}{\partial x^2}\right)^{\!2}_{\!(x_i,t_i)} + \frac{\lambda_{\text{IC}}}{N_{\text{IC}}}\sum_{j=1}^{N_{\text{IC}}}\!\left(\hat{p}_\theta(x_j,0) – p(x_j,t_0)\right)^{\!2} + \frac{\lambda_{\text{BC}}}{N_{\text{BC}}}\sum_{k=1}^{N_{\text{BC}}}\hat{p}_\theta(\pm L, t_k)^2$$

with $N_{\text{PDE}} = 2500$, $N_{\text{IC}} = 1000$, $N_{\text{BC}} = 1000$, $\lambda_{\text{IC}} = 10$ (set higher to prevent the trivial $\hat{p} \equiv 0$ solution from dominating), and $\lambda_{\text{BC}} = 1$. All collocation points are re-sampled uniformly at random each epoch. The model was trained for $t_0 \in \{1.0, 0.1, 0.05, 0.01, 0.001, 0.0001\}$, with 15,000 epochs per run.

4.3 Results¶

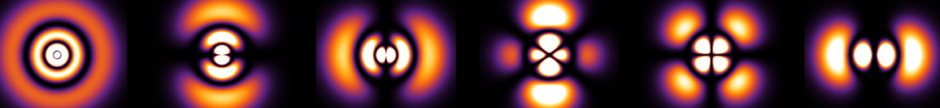

The figures below show the PINN’s predicted profile $\hat{p}(x,t)$ (blue dashed) compared against the analytical Green’s function (black solid) at six time snapshots $t = 0, 0.2, 0.4, 0.6, 0.8, 1.0$, for decreasing values of $t_0$.

As $t_0$ decreases, the initial condition sharpens into a narrower and taller spike, whose Fourier spectrum becomes progressively more broadband (in the limit $t_0 \to 0$, the spectrum is flat since $\hat{\delta}(k) = 1$). This is where the spectral bias of standard MLPs should manifest most clearly.

For $t_0 = 1.0$ and $t_0 = 0.1$, the PINN achieves near-perfect agreement with the analytical solution at all time snapshots, since the initial Gaussian is broad and smooth (dominated by low spatial frequencies that the PINN can represent without difficulty). By $t_0 = 0.01$, the initial peak is sharp enough that small discrepancies appear in the $t = 0$ panel, though the PINN still captures the subsequent diffusive broadening reasonably well. The failure becomes catastrophic at $t_0 = 0.001$ and $t_0 = 0.0001$: the PINN cannot represent the near-$\delta$-function initial condition at all, and this failure propagates forward in time, producing profiles that are asymmetric, too broad, and shifted away from the origin. The PINN’s predictions at later times ($t = 0.6, 0.8, 1.0$) remain wrong even though the analytical solution has by then diffused into a smooth, low-frequency Gaussian. This suggests that spatial spectral bias corrupts the PINN’s internal representation of the solution globally, not just at the initial time.

def plot_fokkerplanck_results(t0_values, ckpt_dir, t_snapshots, D=1.0, x_range=(-10, 10)):

# Load FP checkpoints and plot PINN vs analytical at multiple time snapshots.

for t0 in t0_values:

t0_str = str(t0).replace('.', 'p')

ckpt_path = os.path.join(ckpt_dir, f'fp_t0_{t0_str}.pt')

if not os.path.exists(ckpt_path):

print(f"Checkpoint not found: {ckpt_path}, skipping.")

continue

checkpoint = torch.load(ckpt_path, map_location=DEVICE, weights_only=False)

model = VanillaPINN2D()

model.load_state_dict(checkpoint['model_state_dict'])

model.to(DEVICE)

model.eval()

x = np.linspace(x_range[0], x_range[1], 500)

n_snaps = len(t_snapshots)

fig, axs = plt.subplots(2, 3, figsize=(16, 9))

axs = axs.flatten()

for i, t in enumerate(t_snapshots):

ax = axs[i]

exact_p = analytical_fokker_planck(x, t + t0, D)

xt_grid = np.stack([x, np.full_like(x, t)], axis=1)

xt_tensor = torch.tensor(xt_grid, dtype=torch.float32, device=DEVICE)

with torch.no_grad():

p_pred = model(xt_tensor).cpu().numpy().flatten()

ax.plot(x, exact_p, 'k-', lw=2, label='Analytical')

ax.plot(x, p_pred, 'b--', lw=1.5, label='Vanilla PINN')

ax.set_title(f'$t = {t}$', fontsize=13)

ax.set_xlabel('$x$')

if i % 3 == 0:

ax.set_ylabel('$p(x, t)$')

ax.legend(fontsize=9)

ax.grid(True, alpha=0.3)

fig.suptitle(f'Fokker-Planck: PINN vs Analytical ($t_0 = {t0}$, $D = {D}$)',

fontsize=14, fontweight='bold')

plt.tight_layout()

plt.show()

# Plot Fokker-Planck results for all t0 values

t0_values = [1.0, 0.1, 0.05, 0.01, 0.001, 0.0001]

t_snapshots = [0.0, 0.2, 0.4, 0.6, 0.8, 1.0]

plot_fokkerplanck_results(t0_values, 'fokkerplanck_PINN/checkpoints', t_snapshots)

5. Discussion and Extensions¶

These two experiments probe spectral bias along complementary axes. The van der Pol experiment demonstrates bias in the temporal domain: as $\mu$ increases, the solution $x(t)$ develops sharp transitions in time that the PINN cannot capture. The Fokker-Planck experiment demonstrates bias in the spatial domain: as $t_0$ decreases, the initial condition $p(x, 0)$ develops sharp spatial structure that the PINN cannot represent. In both cases, the fundamental issue is the same: standard MLPs with smooth activation functions tend to learn low-frequency components of the target function first and struggle with high-frequency components.

Several extensions were considered but not implemented:

- Parametric PINN for the van der Pol oscillator: rather than training a separate PINN for each $\mu$, one could train a single network that accepts $(t, x(0), \dot{x}(0), \mu)$ as inputs, learning the entire family of oscillators at once.

- Transfer learning: following Mustajab et al. (2024), one could first train a PINN on low-frequency solutions and then transfer the weights to better learn higher-frequency solutions.

- Normalization penalty for Fokker-Planck: although a normalized initial distribution $\int dx\, p(x,0) = 1$ implies normalization for all subsequent $t$ (provided the probability current vanishes sufficiently fast at the boundaries), for the smaller values of $t_0$ the PINN predictions were no longer even normalized. One way to address this would be to incorporate an explicit normalization penalty proportional to $(1 – \int dx\, p(x,t))^2$ into the cost function, estimated via Monte Carlo integration over the sampled interior points.

- Fourier transform penalties: one could add terms to the cost function that penalize discrepancies in the Fourier domain, particularly at high frequencies (e.g. via a Wasserstein distance).

References¶

- Raissi, M., Perdikaris, P. and Karniadakis, G.E. (2019). Physics-informed neural networks: A deep learning framework for solving forward and inverse problems involving nonlinear partial differential equations. Journal of Computational Physics, 378, pp.686-707.

- Wang, S., Teng, Y. and Perdikaris, P. (2021). Understanding and mitigating gradient flow pathologies in physics-informed neural networks. SIAM Journal on Scientific Computing, 43(5), pp.A3055-A3081.

- Rahaman, N. et al. (2018). On the spectral bias of neural networks. ICML 2019.

- Xu, Z.J. et al. (2019). Frequency principle: Fourier analysis sheds light on deep neural networks. Communications in Computational Physics, 28(5), pp.1746-1767.

- Karniadakis, G.E. et al. (2021). Physics-informed machine learning. Nature Reviews Physics, 3(6), pp.422-440.

- Mustajab, A.H. et al. (2024). Transfer learning enhanced physics informed neural network for phase-field modeling of fracture. Theoretical and Applied Fracture Mechanics, 130, p.104321.